Now that the OMS has been moved and configured, one thing is left: making sure all agents point to the new OMS. The targets monitored by the central agent on the monitoring server itself also need to be moved to the management agent on the new server.

Configure the management agent

Configure the management agent, which was created during the OMS 12c Software Only install, on the new server by executing the following command:

$ ./agentDeploy.sh AGENT_BASE_DIR=/u01/app/agent12c \

AGENT_INSTANCE_HOME=/u01/app/agent12c/agent_inst \

AGENT_PORT=3872 -configOnly OMS_HOST=oramon.isd.org \

EM_UPLOAD_PORT=4903 AGENT_REGISTRATION_PASSWORD=<password>

The output of the command…

$ ./agentDeploy.sh AGENT_BASE_DIR=/u01/app/agent12c AGENT_INSTANCE_HOME=/u01/app/agent12c/agent_inst AGENT_PORT=3872 -configOnly OMS_HOST=<hostname> EM_UPLOAD_PORT=4903 AGENT_REGISTRATION_PASSWORD=<agent_password>

Executing command : /u01/app/agent12c/core/12.1.0.2.0/jdk/bin/java -classpath /u01/app/agent12c/core/12.1.0.2.0/oui/jlib/OraInstaller.jar:/u01/app/agent12c/core/12.1.0.2.0/oui/jlib/xmlparserv2.jar:/u01/app/agent12c/core/12.1.0.2.0/oui/jlib/srvm.jar:/u01/app/agent12c/core/12.1.0.2.0/oui/jlib/emCfg.jar:/u01/app/agent12c/core/12.1.0.2.0/jlib/agentInstaller.jar:/u01/app/agent12c/core/12.1.0.2.0/oui/jlib/share.jar oracle.sysman.agent.installer.AgentInstaller /u01/app/agent12c/core/12.1.0.2.0 /u01/app/agent12c/core/12.1.0.2.0/sysman/install /u01/app/agent12c /u01/app/agent12c/agent_inst AGENT_BASE_DIR=/u01/app/agent12c AGENT_BASE_DIR=/u01/app/agent12c AGENT_INSTANCE_HOME=/u01/app/agent12c/agent_inst AGENT_PORT=3872 -configOnly OMS_HOST=oramon.isd.org EM_UPLOAD_PORT=4903 AGENT_REGISTRATION_PASSWORD=<agent_password>

Performing the agent configuration…

Executing command: /u01/app/agent12c/core/12.1.0.2.0/oui/bin/runConfig.sh ORACLE_HOME=/u01/app/agent12c/core/12.1.0.2.0 RESPONSE_FILE=/u01/app/agent12c/core/12.1.0.2.0/agent.rsp ACTION=configure MODE=perform COMPONENT_XML={oracle.sysman.top.agent.11_1_0_1_0.xml} RERUN=true

Configuration Log Location:/u01/app/agent12c/core/12.1.0.2.0/cfgtoollogs/cfgfw/CfmLogger<timestamp>.log

Now run the root.sh script from the agent's core home as root:

sudo /u01/app/agent12c/core/12.1.0.2.0/root.sh

Re-secure all agents

Re-secure the agents on all nodes against the new OMS:

emctl secure agent -emdWalletSrcUrl "https://<new server>:4903/em"

...

...

emctl start agent

...

emctl status agent

Make sure the Repository URL points to the new location, that ‘Last successful upload’ shows a time stamp, and that the final message is ‘Agent is Running and Ready’:

Repository URL : https://<new server>:4903/empbs/upload

…

Last successful upload : 2014-04-07 11:12:13

---------------------------------------------------------------

Agent is Running and Ready
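Checking these three things by hand on every node gets tedious. As a rough sketch, the check can be scripted by piping the status output through a small shell function. The name `check_agent_status` is our own invention (it is not part of emctl), and the patterns assume output formatted like the sample above:

```shell
# Sketch: validate `emctl status agent` output read from stdin.
# check_agent_status is a hypothetical helper, not an emctl feature.
# Usage: emctl status agent | check_agent_status <expected OMS host>
check_agent_status() (
    expected_host=$1
    status=$(cat)

    # 1. The Repository URL must point at the expected OMS host.
    url=$(printf '%s\n' "$status" |
        sed -n 's/^Repository URL[[:space:]]*:[[:space:]]*//p' | head -n 1)
    hostport=${url#*://}          # drop the scheme (http:// or https://)
    host=${hostport%%[:/]*}       # drop the port and path
    if [ "$host" != "$expected_host" ]; then
        echo "Repository URL is '$url', expected host $expected_host" >&2
        exit 1
    fi

    # 2. 'Last successful upload' must carry a time stamp (yyyy-mm-dd ...).
    printf '%s\n' "$status" |
        grep -Eq '^Last successful upload[[:space:]]*:[[:space:]]*[0-9]{4}-[0-9]{2}-[0-9]{2} ' ||
        { echo "No successful upload recorded yet" >&2; exit 1; }

    # 3. The agent must report that it is running and ready.
    printf '%s\n' "$status" | grep -q '^Agent is Running and Ready' ||
        { echo "Agent does not report 'Running and Ready'" >&2; exit 1; }
)
```

For example, `emctl status agent | check_agent_status oramon.isd.org` prints the reason and returns a non-zero exit status when one of the checks fails.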

Relocate the oracle_emrep target

The next step is to relocate the oracle_emrep target to the new OMS host. This failed in a previous step because the old OMS was not running at the time.

So, on the old OMS, start the management agent before continuing with the next step.

emctl start agent

emctl secure agent -emdWalletSrcUrl "http://oramon.isd.org:4890/em"

On the new server log in to emcli

$ emcli login -username=sysman

Error: Already logged in as user "sysman". Use "emcli logout" to logout the current user.

$ emcli sync

Synchronized successfully

$ emctl config emrep -agent <new server>:3872

Oracle Enterprise Manager Cloud Control 12c Release 2

Copyright (c) 1996, 2012 Oracle Corporation. All rights reserved.

Please enter repository password:

Enter password :

Login successful

Moved all targets from oramon.lrk.org:3872 to oramon.isd.org:3872

Command completed successfully!

Enter password :

Login successful

Moved all targets from oramon.lrk.org:3872 to oramon.isd.org:3872

Command completed successfully!

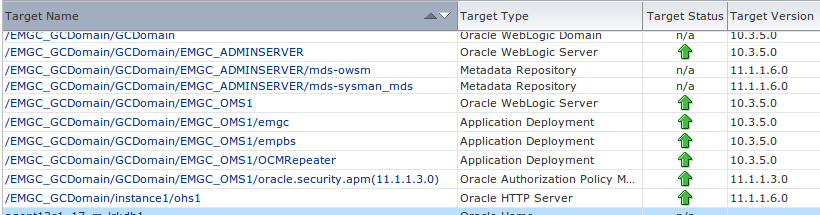

Move all WebLogic targets to the new management agent

Log in to the OEM GUI running on the new server and navigate to the WebLogic Domain target for the Cloud Control domain. In the Target Setup -> Monitoring Credentials section, update the Administration server host value to the new server name, then click OK. Then execute a Refresh WebLogic Domain, selecting Add/Update Targets, to move all WebLogic targets to the new central agent.

Move all WebLogic agent targets to the new management agent

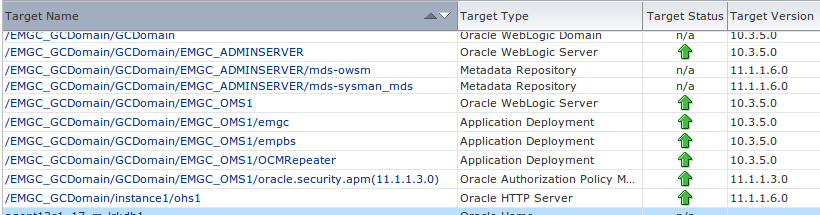

We noticed that after the previous step some targets were still listed on the old OMS agent. On the old OMS:

$ emctl config agent listtargets

Oracle Enterprise Manager Cloud Control 12c Release 2

Copyright (c) 1996, 2012 Oracle Corporation. All rights reserved.

[<old server>, host]

[<old server>:3872, oracle_emd]

[oms12c1_3_oramon, oracle_home]

[EMGC_GCDomain, oracle_ias_farm]

[WebLogicServer10_3_5_0_oramon_9552, oracle_home]

[webtier12c1_22_oramon, oracle_home]

[agent12c1_9_oramon, oracle_home]

[OraDb11g_home1_1_oramon, oracle_home]

[OraDB12Home1_29_oramon, oracle_home]

[/EMGC_GCDomain/GCDomain/EMGC_OMS1/OCMRepeater, j2ee_application]

[/EMGC_GCDomain/GCDomain/EMGC_OMS1/emgc, j2ee_application]

[/EMGC_GCDomain/GCDomain/EMGC_OMS1/empbs, j2ee_application]

[/EMGC_GCDomain/GCDomain/EMGC_ADMINSERVER/mds-owsm, metadata_repository]

[/EMGC_GCDomain/GCDomain/EMGC_ADMINSERVER/mds-sysman_mds, metadata_repository]

[/EMGC_GCDomain/instance1/ohs1, oracle_apache]

[/EMGC_GCDomain/GCDomain/EMGC_OMS1/oracle.security.apm(11.1.1.3.0), oracle_apm]

[/EMGC_GCDomain/GCDomain, weblogic_domain]

[/EMGC_GCDomain/GCDomain/EMGC_ADMINSERVER, weblogic_j2eeserver]

[/EMGC_GCDomain/GCDomain/EMGC_OMS1, weblogic_j2eeserver]
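A long listing like this is easier to judge when it is summarised per target type. The following is a minimal sketch, assuming the `[name, type]` line format shown above; `count_targets_by_type` is a hypothetical helper name, not an emctl feature:

```shell
# Sketch: summarise `emctl config agent listtargets` output by target type.
# Reads the listing on stdin. Only lines of the form "[name, type]" are
# counted, so the banner and copyright lines are ignored.
count_targets_by_type() {
    # Keep only the type, i.e. the text after the last comma inside [...].
    sed -n 's/^\[.*, *\([^],]*\)\]$/\1/p' |
        sort | uniq -c |
        awk '{print $2, $1}'    # print "type count"
}
```

Run on the old OMS as `emctl config agent listtargets | count_targets_by_type`, it prints one line per target type followed by the number of targets of that type still attached to the agent.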

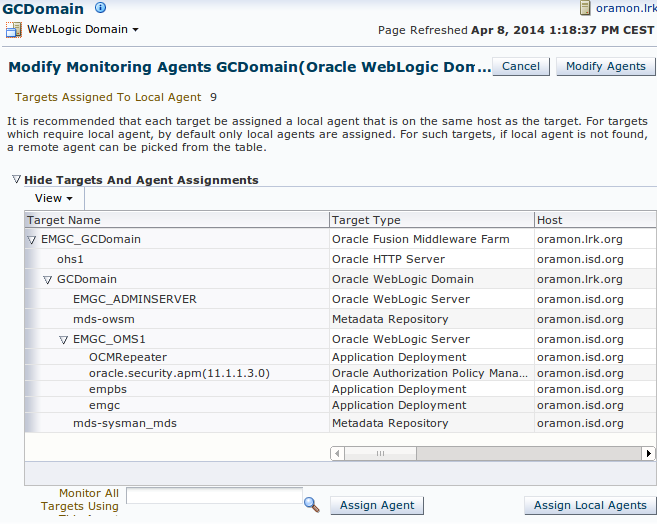

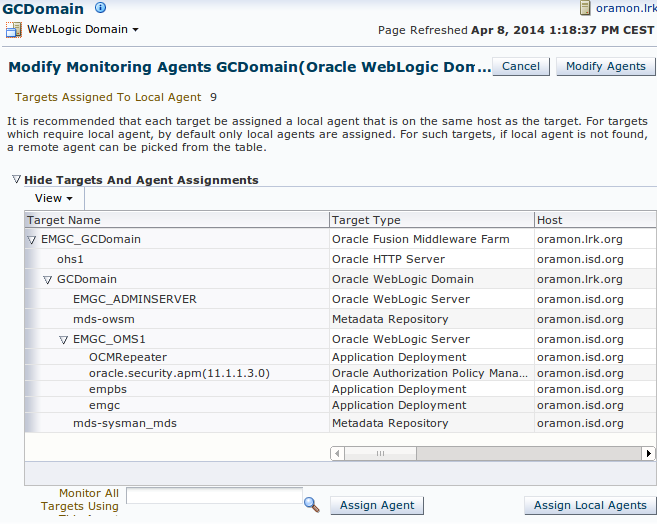

To fix this we opened the GCDOMAIN (Weblogic Domain) target [/EMGC_GCDomain/GCDomain/]

– [Weblogic Domain] – [Target Setup] – [Modify Agents] – [Continue]

– [Monitor All Targets Using This Agent] – [Select correct agent host] – [Modify Agents]

Move remaining WebLogic targets

Some WebLogic targets still remained on the old agent running on the old OMS server:

$ emctl config agent listtargets

Oracle Enterprise Manager Cloud Control 12c Release 2

Copyright (c) 1996, 2012 Oracle Corporation. All rights reserved.

[<old server>, host]

[<old server>:3872, oracle_emd]

[WebLogicServer10_3_5_0_oramon_9552, oracle_home]

[webtier12c1_22_oramon, oracle_home]

We will relocate them manually with emcli relocate_targets on the new OMS server:

$ emcli relocate_targets \

-src_agent=<old server>:3872 \

-dest_agent=<new server>:3872 \

-target_name=webtier12c1_22_oramon \

-target_type=oracle_home \

-copy_from_src -force=yes

Moved all targets from <old server>:3872 to <new server>:3872

$ emcli relocate_targets \

-src_agent=<old server>:3872 \

-dest_agent=<new server>:3872 \

-target_name=WebLogicServer10_3_5_0_oramon_9552 \

-target_type=oracle_home \

-copy_from_src -force=yes

Moved all targets from <old server>:3872 to <new server>:3872

Now check on the old server whether there are still any WebLogic targets left that should be present on the new server.
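If more than a handful of targets are left behind, typing these commands by hand is error-prone. As a sketch only, the listing from the old agent can be turned into `emcli relocate_targets` commands that use the same options as above. The helper name `print_relocate_commands` is made up for this example, and it assumes target names contain no `|` character. It prints the commands instead of running them, so each one can be reviewed first; we only relocated the two oracle_home targets this way ourselves.

```shell
# Sketch: turn `emctl config agent listtargets` output (stdin) into
# `emcli relocate_targets` commands, one per target left on the old agent.
# print_relocate_commands is a hypothetical helper. Commands are only
# printed, never executed.
# Usage: emctl config agent listtargets | print_relocate_commands <src agent> <dest agent>
print_relocate_commands() (
    src_agent=$1
    dest_agent=$2

    # Rewrite each "[name, type]" line as "name|type"; skip everything else.
    sed -n 's/^\[\(.*\), *\([^],]*\)\]$/\1|\2/p' |
    while IFS='|' read -r name type; do
        case $type in
            # The host and the agent itself stay with the old machine.
            host|oracle_emd) continue ;;
        esac
        printf 'emcli relocate_targets -src_agent=%s -dest_agent=%s -target_name="%s" -target_type=%s -copy_from_src -force=yes\n' \
            "$src_agent" "$dest_agent" "$name" "$type"
    done
)
```

The printed lines are meant to be run on the new OMS server after an `emcli login`, as in the manual commands above.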

Update EM Console Service

At this point only one target was still showing as down: the EM Console Service. Go to the target and click on the Monitoring Configuration tab. Click on Service Tests and Beacons, select the EM Console Service Test, and click the Edit button. Make sure the “Access Login page” step is selected, and click Edit. Change the URL to reflect your new OEM server, and save the changes.

Update Management Services and Repository

Go to target Management Services and Repository

– [OMS and Repository] – [Target Setup] – [Monitoring Configuration] –

– Change [ Repository Connect Descriptor ]

(DESCRIPTION=(ADDRESS_LIST=(ADDRESS=(PROTOCOL=TCP)(HOST=oramon.lrk.org)

(PORT=1521)))(CONNECT_DATA=(SID=CCON1)))

to

(DESCRIPTION=(ADDRESS_LIST=(ADDRESS=(PROTOCOL=TCP)(HOST=oramon.isd.org)

(PORT=1521)))(CONNECT_DATA=(SID=CCON1)))

Fix targets in Status Pending

OEM12c has a great feature that allows you to resynchronize an agent from the OEM console. Here are the steps:

- Go to Setup -> Manage Cloud Control -> Agents;

- Click on the testdrv01 agent;

- On the drop down menu from Agent, choose Resynchronization;

- Be sure to select “Unblock agent on successful completion of agent resynchronization”.

The next step is probably unsupported and perhaps not necessary for your configuration, but it fixed our last issues!

Replaced <old server> with <new server> in:

/u01/app/agent12c/agent_inst/sysman/emd/targets.xml

/u01/app/mware/gc_inst/user_projects/domains/GCDomain/config/config.xml

/u01/app/mware/gc_inst/user_projects/domains/GCDomain/init-info/startscript.xml
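Editing these files by hand invites typos, so here is a minimal sketch of how such a substitution could be scripted with a backup copy. `replace_host_in_file` is a hypothetical helper, and it assumes plain host names made of letters, digits, dots and hyphens. It replaces every occurrence of the first name, including occurrences inside longer names, so check the result before restarting anything.

```shell
# Sketch: replace one host name with another in a file, keeping a backup.
# replace_host_in_file is a hypothetical helper.
# Usage: replace_host_in_file <name to replace> <replacement name> <file>
replace_host_in_file() (
    from=$1
    to=$2
    file=$3

    # Refuse to overwrite an earlier backup.
    if [ -e "$file.bak" ]; then
        echo "Backup $file.bak already exists, not touching $file" >&2
        exit 1
    fi

    # Escape the dots so they match literally instead of "any character".
    from_re=$(printf '%s\n' "$from" | sed 's/\./\\./g')

    # Keep the original as <file>.bak, then rewrite the file in place.
    cp -p "$file" "$file.bak" || exit 1
    sed "s/$from_re/$to/g" "$file.bak" > "$file"
)
```

Called once per file listed above, this leaves a `.bak` copy next to each edited file, which makes it easy to diff the change or to roll it back.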

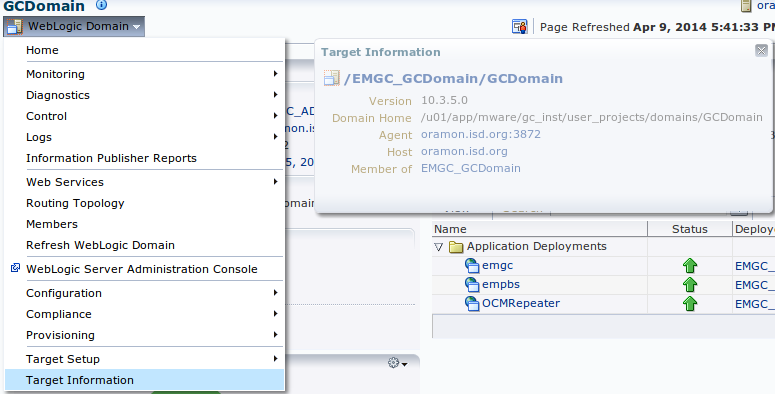

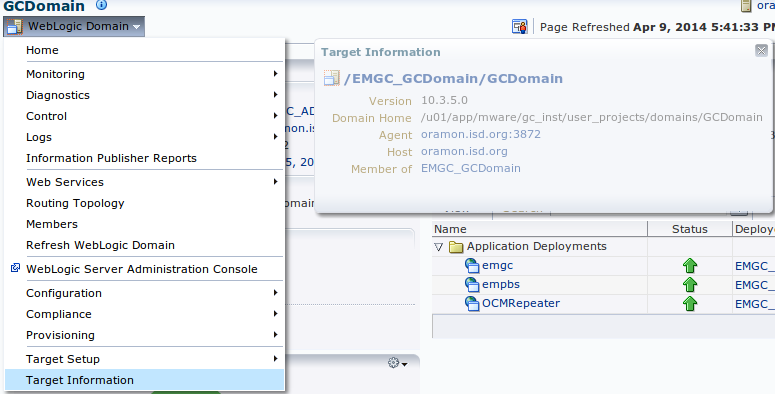

Update host for Weblogic Domain and IAS Farm

The next step is probably unsupported and perhaps not necessary for your configuration, but it fixed our last issues!

The targets Oracle WebLogic Domain and Oracle Fusion Middleware Farm still had the old host configured in [Target Information]. Not knowing how to fix this with the tools, we decided to update the repository directly:

Connect as SYSMAN to the repository database:

update sysman.em_manageable_entities set host_name = 'oramon.isd.org'

where entity_type = 'weblogic_domain'

and entity_name = '/EMGC_GCDomain/GCDomain';

update sysman.em_manageable_entities set host_name = 'oramon.isd.org'

where entity_type = 'oracle_ias_farm'

and entity_name = 'EMGC_GCDomain';

After the update has been committed, both Agent and Host refer to the new OMS server oramon.isd.org.

At this point our Enterprise Manager 12c installation is completely configured and running on our new monitoring server.